jkirker

Verified User

- Joined: Nov 22, 2012
- Messages: 126

I'm in a world of hurt backing up to an S3 bucket over FTP, and I need help.

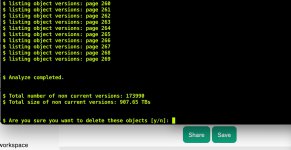

I have several accounts whose backups are multiple gigs. The problem with using FTP to S3 is that FTP sends files in 100M increments, so a 2 gig file is sent as 20 separate files that grow incrementally. This is especially bad when versioning is on.

For example:

- File 1 is 100M
- File 2 is 200M
- File 3 is 300M, and so on.

The end result? A 2G file ends up taking 21G of storage unless the chunk files are deleted.

So this month, and for the next 90 days, I'm facing hundreds of dollars in fees: the charges keep coming until the files I find are deleted and have stayed deleted for 90 days. Ultimately this issue will cost me several thousand dollars.

Why is there no option to back up to S3 (or S3-compatible) systems using the S3 API? And where can I increase the FTP chunk size to try to limit my losses?

Thanks,

John